Climate DT Parameter Series Plot - Data Access using DEDL HDA

This notebook authenticates a user with DestinE services, constructs and submits data requests to the DEDL HDA API for Climate Digital Twin projections, polls for availability, downloads GRIB data for multiple years, and visualizes it using EarthKit.

To search and access DEDL data, a DestinE user account is needed.

To search and access DT data, upgraded access is needed.

Earthkit and HDA Polytope used in this context are both packages provided by the European Centre for Medium-Range Weather Forecasts (ECMWF).


Contents

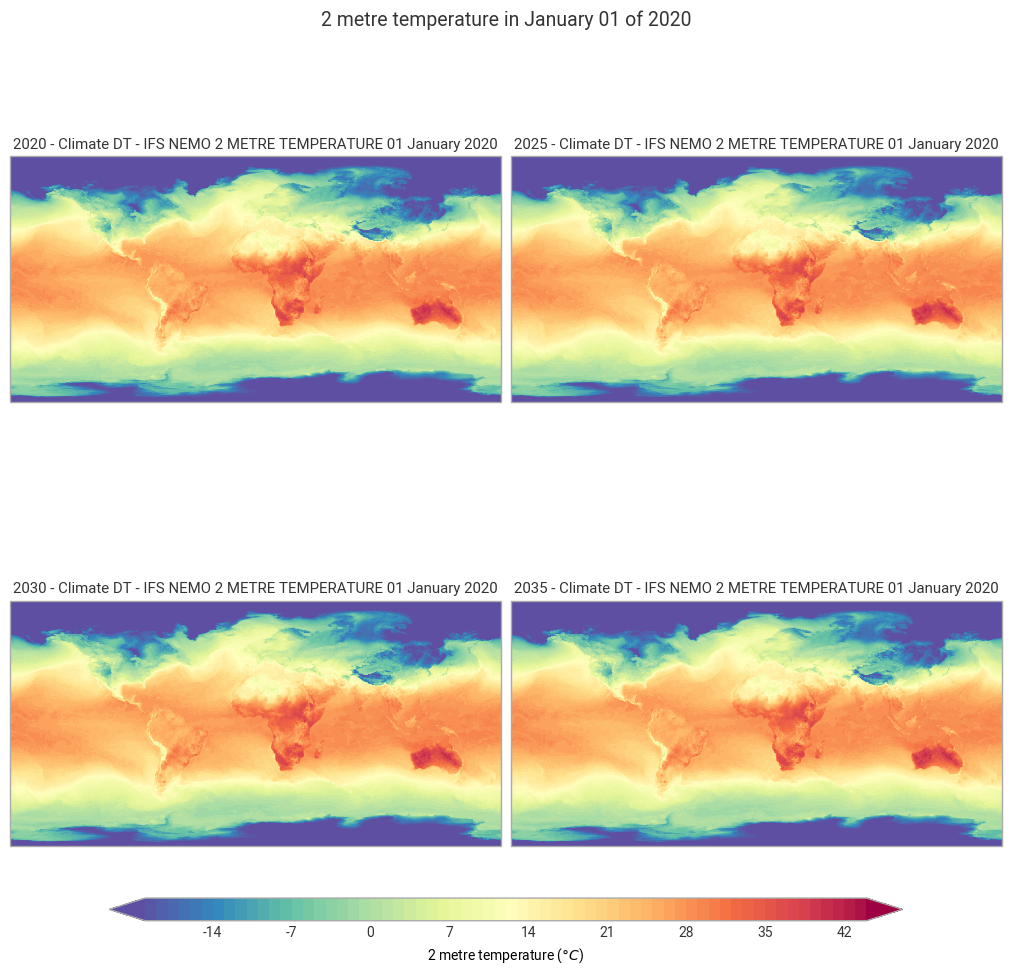

Objective: This notebook aims to show how to use the HDA (Harmonized Data Access) API to query and access Climate DT data to plot a parameter series.

Data Sources: https://destine.ecmwf.int/climate-change-adaptation-digital-twin-climate-dt/

Methods: The data request is performed using the HDA REST API. The variable used in this notebook is the “2 metre temperature”, the temperature of air at 2 m above the surface of land, sea or inland waters. The main steps covered by this tutorial are listed below.

Setup: Import the required libraries.

Search: Search for 2 metre temperature.

Order and Download: How to filter and download Climate DT data.

Plot: How to visualize hourly single-level data through EarthKit.

Prerequisites:

To search and access DEDL data, a DestinE user account is needed.

To search and access DT data, upgraded access is needed.

Expected Output:

4 GRIB files containing the requested data, deleted in the last cell.

4 map plots of the 2 metre temperature for different years.

Setup

pip install --user --quiet --upgrade destinelab

Note: you may need to restart the kernel to use updated packages.

Import all the required packages.

import destinelab as deauth
import json
import requests
from requests.adapters import HTTPAdapter
from urllib3.util.retry import Retry
import os
from getpass import getpass
from tqdm import tqdm
import time
from datetime import datetime
from urllib.parse import unquote
from IPython.display import JSON
import ipywidgets as w

Search for 2 metre temperature

Obtain Authentication Token

To access data we need to be authenticated.

Below is how to request an authentication token using the destinelab package.

DESP_USERNAME = input("Please input your DESP username: ")
DESP_PASSWORD = getpass("Please input your DESP password: ")

auth = deauth.AuthHandler(DESP_USERNAME, DESP_PASSWORD)
access_token = auth.get_token()
if access_token is not None:
    print("DEDL/DESP Access Token Obtained Successfully")
else:
    print("Failed to Obtain DEDL/DESP Access Token")

auth_headers = {"Authorization": f"Bearer {access_token}"}

Please input your DESP username: eum-dedl-user

Please input your DESP password: ········

DEDL/DESP Access Token Obtained Successfully

Response code: 200

DEDL/DESP Access Token Obtained Successfully

Check if DT access is granted

If DT access is not granted, you will not be able to execute the rest of the notebook.

auth.is_DTaccess_allowed(access_token)

True

Deriving Query Parameters from STAC Metadata

The variables available in the selected collection can be retrieved directly from its STAC metadata. Below, we list all parameters along with their relevant information.

HDA Endpoints

The HDA API is based on the SpatioTemporal Asset Catalog (STAC) specification. When accessing DestinE data through the HDA API, it is useful to define a small set of configuration constants upfront. These typically include:

The STAC API endpoint exposed by HDA

The collection name

While the collection name can be specified as a constant, it does not need to be known in advance, as available collections can be discovered dynamically using the discovery API.
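As a minimal sketch, these constants and the URLs derived from them could be grouped in one small object. The `HdaConfig` class and its method names are hypothetical and not part of any DestinE package; the URL patterns follow the endpoints that appear elsewhere in this notebook (`/collections`, `/search`, and `/collections/{id}/items/{item}`).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HdaConfig:
    """Hypothetical container for the HDA constants used in this notebook."""

    stac_endpoint: str = "https://hda.data.destination-earth.eu/stac/v2"

    @property
    def collections_url(self) -> str:
        # Discovery endpoint: lists and searches the available collections
        return f"{self.stac_endpoint}/collections"

    @property
    def search_url(self) -> str:
        # STAC item search endpoint
        return f"{self.stac_endpoint}/search"

    def item_url(self, collection_id: str, item_id: str) -> str:
        # URL of a single item, e.g. an ordered product that is being polled
        return f"{self.stac_endpoint}/collections/{collection_id}/items/{item_id}"


config = HdaConfig()
print(config.collections_url)
print(config.item_url("SOME.COLLECTION", "some-item-id"))
```

Keeping the base URL in a single place means that switching to another HDA deployment only requires changing one value.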

HDA_STAC_ENDPOINT = "https://hda.data.destination-earth.eu/stac/v2"
print("STAC endpoint: ", HDA_STAC_ENDPOINT)

STAC endpoint: https://hda.data.destination-earth.eu/stac/v2

HDA_DISCOVERY_ENDPOINT = HDA_STAC_ENDPOINT + '/collections'
print("HDA discovery endpoint: ", HDA_DISCOVERY_ENDPOINT)

HDA discovery endpoint: https://hda.data.destination-earth.eu/stac/v2/collections

HDA Discovery

For this example we want to access the Future Projection data produced with the IFS-NEMO model by the Climate Change Adaptation Digital Twin. To find the right collection ID to use for querying HDA, we can use the free-text search offered by the HDA Discovery API, searching for, e.g., "Climate Change Adaptation Digital Twin", "Future Projection" and "IFS-NEMO".

The result of this operation will give us the collection ID and some other useful information like the temporal extent and the available parameters.

discovery_json = requests.get(
    HDA_DISCOVERY_ENDPOINT,
    params={"q": '"Climate Change Adaptation Digital Twin","Future Projection","IFS-NEMO"'},
).json()

print("The discovery result give us:\nthe collection ID : ", discovery_json["collections"][0].get("id"))
print("\nIts time extension : ", discovery_json["collections"][0].get("extent").get("temporal").get("interval"))
#print("\nThe available parameters: ", discovery_json["collections"][0].get("cube:variables").keys())

parameters = discovery_json["collections"][0]["cube:variables"]
print("\nThe available parameters: ")
keys = sorted(parameters)
print(json.dumps(keys, indent=2))

COLLECTION_ID = discovery_json["collections"][0].get("id")
TARGET_COLLECTION_ID = "EO.ECMWF.DAT.D1.DT_CLIMATE.G1.SCENARIOMIP_SSP3-7.0_IFS-NEMO.R1"
if COLLECTION_ID != TARGET_COLLECTION_ID:
    print("Target collection not found in discovery response.")

The discovery result give us:

the collection ID : EO.ECMWF.DAT.D1.DT_CLIMATE.G1.SCENARIOMIP_SSP3-7.0_IFS-NEMO.R1

Its time extension : [['2020-01-01T00:00:00Z', '2039-12-31T23:59:59Z']]

The available parameters:

[

"100_metre_U_wind_component(hl)",

"100_metre_V_wind_component(hl)",

"10_metre_U_wind_component(sfc)",

"10_metre_V_wind_component(sfc)",

"2_metre_dewpoint_temperature(sfc)",

"2_metre_temperature(sfc)",

"Boundary_layer_height(sfc)",

"Charnock(sfc)",

"Evaporation(sfc)",

"Geopotential(pl)",

"Geopotential(sfc)",

"High_cloud_cover(sfc)",

"Land-sea_mask(sfc)",

"Low_cloud_cover(sfc)",

"Mean_sea_level_pressure(sfc)",

"Medium_cloud_cover(sfc)",

"Potential_vorticity(pl)",

"Relative_humidity(pl)",

"Skin_temperature(sfc)",

"Snow_depth(sfc)",

"Snow_depth_water_equivalent(sol)",

"Snowfall(sfc)",

"Specific_cloud_liquid_water_content(pl)",

"Specific_humidity(pl)",

"Sub-surface_runoff(sfc)",

"Surface_long-wave_(thermal)_radiation_downwards(sfc)",

"Surface_net_long-wave_(thermal)_radiation(sfc)",

"Surface_net_short-wave_(solar)_radiation(sfc)",

"Surface_pressure(sfc)",

"Surface_runoff(sfc)",

"Surface_short-wave_(solar)_radiation_downwards(sfc)",

"TOA_incident_short-wave_(solar)_radiation(sfc)",

"Temperature(pl)",

"Time-integrated_eastward_turbulent_surface_stress(sfc)",

"Time-integrated_northward_turbulent_surface_stress(sfc)",

"Time-integrated_surface_latent_heat_net_flux(sfc)",

"Time-integrated_surface_sensible_heat_net_flux(sfc)",

"Time-mean_X-component_of_sea_ice_velocity(o2d)",

"Time-mean_Y-component_of_sea_ice_velocity(o2d)",

"Time-mean_eastward_sea_ice_velocity(o2d)",

"Time-mean_eastward_sea_water_velocity(o3d)",

"Time-mean_northward_sea_ice_velocity(o2d)",

"Time-mean_northward_sea_water_velocity(o3d)",

"Time-mean_sea_ice_area_fraction(o2d)",

"Time-mean_sea_ice_thickness(o2d)",

"Time-mean_sea_ice_volume_per_unit_area(o2d)",

"Time-mean_sea_surface_height(o2d)",

"Time-mean_sea_surface_practical_salinity(o2d)",

"Time-mean_sea_surface_temperature(o2d)",

"Time-mean_sea_water_potential_temperature(o3d)",

"Time-mean_sea_water_practical_salinity(o3d)",

"Time-mean_snow_volume_over_sea_ice_per_unit_area(o2d)",

"Time-mean_upward_sea_water_velocity(o3d)",

"Time-mean_vertically-integrated_heat_content_in_the_upper_300_m(o2d)",

"Time-mean_vertically-integrated_heat_content_in_the_upper_700_m(o2d)",

"Top_net_long-wave_(thermal)_radiation(sfc)",

"Top_net_short-wave_(solar)_radiation(sfc)",

"Total_cloud_cover(sfc)",

"Total_column_cloud_ice_water(sfc)",

"Total_column_cloud_liquid_water(sfc)",

"Total_column_vertically-integrated_water_vapour(sfc)",

"Total_precipitation(sfc)",

"Total_precipitation_rate(sfc)",

"U_component_of_wind(pl)",

"V_component_of_wind(pl)",

"Vertical_velocity(pl)"

]
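The discovery code takes the first collection in the response and then compares its ID with the expected one. A slightly more defensive variant, sketched below, looks the collection up by ID, so the result does not depend on the order of the response. The `find_collection` helper and the trimmed-down `sample` response are hypothetical; only the `collections`, `id` and `cube:variables` keys are taken from the response used in this notebook.

```python
def find_collection(discovery_json, collection_id):
    """Return the collection with the given id, or None if it is absent."""
    for collection in discovery_json.get("collections", []):
        if collection.get("id") == collection_id:
            return collection
    return None


# Hypothetical, trimmed-down discovery response used only for illustration
sample = {
    "collections": [
        {"id": "SOME.OTHER.COLLECTION", "cube:variables": {}},
        {
            "id": "EO.ECMWF.DAT.D1.DT_CLIMATE.G1.SCENARIOMIP_SSP3-7.0_IFS-NEMO.R1",
            "cube:variables": {"2_metre_temperature(sfc)": {"unit": "K"}},
        },
    ]
}

TARGET = "EO.ECMWF.DAT.D1.DT_CLIMATE.G1.SCENARIOMIP_SSP3-7.0_IFS-NEMO.R1"
match = find_collection(sample, TARGET)
variables = sorted(match["cube:variables"]) if match else []
print(variables)
```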

From the list of available parameters, we select 2_metre_temperature at the surface level (levtype = sfc).

Using the metadata, we can extract the information required to formulate the HDA query and retrieve the desired product.

for var_name, var_info in parameters.items():
    if var_name == "2_metre_temperature(sfc)":
        var_type = var_info.get("type")
        var_unit = var_info.get("unit")
        var_url = var_info.get("attrs").get("url")
        two_metre_temperature = {
            "type": var_type,
            "unit": var_unit,
            "long_name": var_info.get("attrs").get("long_name"),
            "shortName": var_info.get("attrs").get("shortName"),
            "standard_name": var_info.get("attrs").get("standard_name"),
            "url": var_url,
            "parameter_ID": var_info.get("attrs").get("parameter_ID"),
            "product_type": var_info.get("attrs").get("product_type"),
            "levtype": var_info.get("attrs").get("levtype"),
            "levelist": var_info.get("attrs").get("levelist"),
            "time": var_info.get("attrs").get("time")
        }

two_metre_temperature

{'type': 'data',

'unit': 'K',

'long_name': '2 metre temperature',

'shortName': '2t',

'standard_name': '2_metre_temperature',

'url': 'https://codes.ecmwf.int/grib/param-db/167',

'parameter_ID': '167',

'product_type': 'forecast',

'levtype': 'sfc',

'levelist': '',

'time': 'Hourly'}

response = requests.post(HDA_STAC_ENDPOINT + "/search", headers=auth_headers, json={
    "collections": [COLLECTION_ID],
    "datetime": "2020-01-01T00:00:00Z",
    "query": {
        "ecmwf:resolution": {"eq": "high"},
        "ecmwf:levtype": {"eq": two_metre_temperature["levtype"]},
        "ecmwf:time": {"eq": ["1200"]},
        "ecmwf:param": {"eq": [two_metre_temperature["parameter_ID"]]}
    }
})

if response.status_code != 200:
    print(response.text)
response.raise_for_status()

product = response.json()["features"][0]
JSON(product)

Order and Download

We would like to obtain the temperature on the same day and month every 5 years across the temporal extent of our collection, which spans 2020 to 2039.

From the previous search result we can retrieve the precise body and URL needed to order and download the data.

To retrieve data, you must first submit an order using the retrieved body. Each order is processed asynchronously; once a product becomes available, it can be downloaded. The code below iterates over the years of the collection's temporal extent in 5-year steps and submits one data request per selected year (same day and month).

link = next((l for l in product.get('links', []) if l.get("rel") == "retrieve"), None)

if link:
    href = link.get("href")
    body = link.get("body")  # optional: depends on extension
    print("order endpoint:", href)
    print("order body, same as the polytope format:")
    print(json.dumps(body, indent=4))
else:
    print("No link with rel='retrieve' found")

order endpoint: https://hda.data.destination-earth.eu/stac/v2/collections/EO.ECMWF.DAT.D1.DT_CLIMATE.G1.SCENARIOMIP_SSP3-7.0_IFS-NEMO.R1/order

order body, same as the polytope format:

{

"activity": "ScenarioMIP",

"class": "d1",

"dataset": "climate-dt",

"date": "20200101/to/20200101",

"experiment": "SSP3-7.0",

"expver": "0001",

"generation": "1",

"levtype": "sfc",

"model": "IFS-NEMO",

"param": [

"167"

],

"realization": "1",

"resolution": "high",

"stream": "clte",

"time": [

"1200"

],

"type": "fc"

}

To loop over the years, we need to modify the date in the order body and then order and download.
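One way to change the date without touching the original order body is to derive a fresh copy for each request, as sketched below. The `order_body_for_date` helper is hypothetical, and the `template` dictionary is a shortened stand-in for the order body printed above; the `YYYYMMDD/to/YYYYMMDD` date form is the one shown in that body.

```python
import copy


def order_body_for_date(body, date):
    """Return a copy of the order body that requests a single day.

    `date` is a string in YYYYMMDD form; the original body is left unchanged.
    """
    new_body = copy.deepcopy(body)
    new_body["date"] = f"{date}/to/{date}"
    return new_body


# Shortened stand-in for the order body returned by the search
template = {
    "class": "d1",
    "dataset": "climate-dt",
    "date": "20200101/to/20200101",
    "param": ["167"],
    "time": ["1200"],
}

bodies = [order_body_for_date(template, d) for d in ["20200731", "20250731"]]
for b in bodies:
    print(b["date"])
```

Working on copies matters when the requests are submitted from several threads, because no thread can then see a date written by another one.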

# Requests to polytope sometimes time out, so it is convenient to define a retry strategy
retry_strategy = Retry(
    total=5,  # Total number of retries
    status_forcelist=[500, 502, 503, 504],  # List of 5xx status codes to retry on
    allowed_methods=["GET", "POST"],  # Methods to retry
    backoff_factor=1  # Wait time between retries (exponential backoff)
)

# Create an adapter with the retry strategy
adapter = HTTPAdapter(max_retries=retry_strategy)

# Create a session and mount the adapter
session = requests.Session()
session.mount("https://", adapter)

# Initialize a list to store filenames
filenames = []

# Define start and end years
start_year = 2020
end_year = 2039
dates = []

# Loop over the years in 5-year steps
for year in range(start_year, end_year + 1, 5):
    # Create a datetime object
    obsdate = datetime(year, 7, 31)
    datechoice = obsdate.strftime("%Y%m%d")
    dates.append(datechoice)

dates

['20200731', '20250731', '20300731', '20350731']

from concurrent.futures import ThreadPoolExecutor, as_completed

# Timeout and step for polling (sec)
TIMEOUT = 300
STEP = 1
ONLINE_STATUS = "online"

# Maps each requested date to its downloaded file, so the files can be listed in date order
downloaded = {}

def download_date(date):
    # Order a per-call copy of the body with the requested date,
    # in the same "YYYYMMDD/to/YYYYMMDD" form as the original body
    order_body = {**body, "date": f"{date}/to/{date}"}
    response = session.post(href, json=order_body, headers=auth_headers)
    if response.status_code != 200:
        print(response.content)
    response.raise_for_status()

    ordered_item = response.json()
    product_id = ordered_item["id"]
    storage_tier = ordered_item["properties"].get("storage:tier", "online")
    order_status = ordered_item["properties"].get("order:status", "unknown")
    federation_backend = ordered_item["properties"].get("federation:backends", [None])[0]

    print(f"Product ordered: {product_id}")
    print(f"Provider: {federation_backend}")
    print(f"Storage tier: {storage_tier} (product must have storage tier \"online\" to be downloadable)")
    print(f"Order status: {order_status}")

    self_url = f"{HDA_STAC_ENDPOINT}/collections/{COLLECTION_ID}/items/{product_id}"
    item = {}

    # Poll the item until its storage tier is online
    for i in range(0, TIMEOUT, STEP):
        print(f"Polling {i + 1}/{TIMEOUT // STEP}")
        response = session.get(self_url, headers=auth_headers)
        if response.status_code != 200:
            print(response.content)
        response.raise_for_status()
        item = response.json()

        storage_tier = item["properties"].get("storage:tier", ONLINE_STATUS)
        if storage_tier == ONLINE_STATUS:
            download_url = item["assets"]["downloadLink"]["href"]
            print("Product is ready to be downloaded.")
            print(f"Asset URL: {download_url}")
            break
        time.sleep(STEP)
    else:
        order_status = item["properties"].get("order:status", "unknown")
        # Stop here: without a download URL there is nothing to fetch
        raise TimeoutError(f"We could not download the product after {TIMEOUT // STEP} tries. Current order status is {order_status}")

    response = session.get(download_url, stream=True, headers=auth_headers)
    response.raise_for_status()

    content_disposition = response.headers.get('Content-Disposition')
    total_size = int(response.headers.get("content-length", 0))
    if content_disposition:
        filename = content_disposition.split('filename=')[1].split('"')[1]
    else:
        filename = os.path.basename(download_url)
    downloaded[date] = filename

    # Open a local file in binary write mode and write the content
    print(f"downloading {filename}")
    with tqdm(total=total_size, unit="B", unit_scale=True) as progress_bar:
        with open(filename, 'wb') as f:
            for data in response.iter_content(1024):
                progress_bar.update(len(data))
                f.write(data)

    result = filename + ' NOT'
    if os.path.exists(filename):
        result = filename
    return result

# Run downloads in parallel
with ThreadPoolExecutor(max_workers=4) as executor:
    futures = [executor.submit(download_date, d) for d in dates]
    for future in as_completed(futures):
        result = future.result()
        print(result, " downloaded")

# List the files in the same order as the requested dates (threads finish in any order)
filenames = [downloaded[d] for d in dates]

Product ordered: 2d35c1fe-3fd9-4d51-9d9b-798873acca0b

Provider: dedt_lumi

Storage tier: offline (product must have storage tier "online" to be downloadable)

Order status: ordered

Polling 1/300

Product ordered: 6b21314c-6f82-4932-b5c6-6fc263f3f0eb

Provider: dedt_lumi

Storage tier: offline (product must have storage tier "online" to be downloadable)

Order status: ordered

Polling 1/300

Product ordered: e5856884-b168-4105-9700-8926c0153c36

Provider: dedt_lumi

Storage tier: offline (product must have storage tier "online" to be downloadable)

Order status: ordered

Polling 1/300

Polling 2/300

Product ordered: 8d0dc42c-f30d-4590-b572-120b22f7b29d

Provider: dedt_lumi

Storage tier: offline (product must have storage tier "online" to be downloadable)

Order status: ordered

Polling 1/300

Polling 2/300

Polling 2/300

Polling 3/300

Polling 3/300

Polling 3/300

Product is ready to be downloaded.

Asset URL: https://hda-download.lumi.data.destination-earth.eu/data/dedt_lumi/EO.ECMWF.DAT.D1.DT_CLIMATE.G1.SCENARIOMIP_SSP3-7.0_IFS-NEMO.R1/e5856884-b168-4105-9700-8926c0153c36/downloadLink

Polling 2/300

downloading e5856884-b168-4105-9700-8926c0153c36.grib

11.3MB [00:00, 45.9MB/s]

Product is ready to be downloaded.

Asset URL: https://hda-download.lumi.data.destination-earth.eu/data/dedt_lumi/EO.ECMWF.DAT.D1.DT_CLIMATE.G1.SCENARIOMIP_SSP3-7.0_IFS-NEMO.R1/8d0dc42c-f30d-4590-b572-120b22f7b29d/downloadLink

Product is ready to be downloaded.

Asset URL: https://hda-download.leonardo.data.destination-earth.eu/data/dedt_lumi/EO.ECMWF.DAT.D1.DT_CLIMATE.G1.SCENARIOMIP_SSP3-7.0_IFS-NEMO.R1/2d35c1fe-3fd9-4d51-9d9b-798873acca0b/downloadLink

26.1MB [00:00, 55.2MB/s]

Polling 4/300

e5856884-b168-4105-9700-8926c0153c36.grib downloaded

downloading 8d0dc42c-f30d-4590-b572-120b22f7b29d.grib

26.1MB [00:00, 53.4MB/s]

8d0dc42c-f30d-4590-b572-120b22f7b29d.grib downloaded

downloading 2d35c1fe-3fd9-4d51-9d9b-798873acca0b.grib

8.99MB [00:00, 29.6MB/s]

Product is ready to be downloaded.

Asset URL: https://hda-download.leonardo.data.destination-earth.eu/data/dedt_lumi/EO.ECMWF.DAT.D1.DT_CLIMATE.G1.SCENARIOMIP_SSP3-7.0_IFS-NEMO.R1/6b21314c-6f82-4932-b5c6-6fc263f3f0eb/downloadLink

26.1MB [00:00, 33.7MB/s]

2d35c1fe-3fd9-4d51-9d9b-798873acca0b.grib downloaded

downloading 6b21314c-6f82-4932-b5c6-6fc263f3f0eb.grib

26.1MB [00:01, 13.5MB/s]

6b21314c-6f82-4932-b5c6-6fc263f3f0eb.grib downloaded
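The file names in the output come from the `Content-Disposition` header of the download response. Splitting the header on double quotes only works when the file name is quoted. The sketch below shows a more tolerant alternative based on the standard library's `email.message.Message`; the helper name `filename_from_content_disposition` and the sample header values are hypothetical.

```python
from email.message import Message


def filename_from_content_disposition(header_value, fallback):
    """Extract the file name from a Content-Disposition header value.

    Returns `fallback` when the header is missing or carries no file name.
    """
    if not header_value:
        return fallback
    msg = Message()
    msg["Content-Disposition"] = header_value
    # get_filename() handles both quoted and unquoted parameter values
    return msg.get_filename() or fallback


quoted = filename_from_content_disposition('attachment; filename="abc.grib"', "download.grib")
unquoted = filename_from_content_disposition("attachment; filename=abc.grib", "download.grib")
missing = filename_from_content_disposition(None, "download.grib")
print(quoted, unquoted, missing)
```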

Poll the API until product is ready

The download function above requests the product item itself at each polling step to get an update of its status, until its storage tier is online.
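The polling pattern can also be written as a small reusable helper, sketched here under the assumption that the status check is passed in as a function. `poll_until` and `fake_check` are hypothetical names; the simulated storage tiers replace the real HTTP request so that the example runs offline and without waiting.

```python
import time


def poll_until(check, timeout, step, sleep=time.sleep):
    """Call check() every `step` seconds until it returns a non-None value.

    Raises TimeoutError when nothing is returned within `timeout` seconds.
    """
    attempts = max(1, int(timeout // step))
    for _ in range(attempts):
        result = check()
        if result is not None:
            return result
        sleep(step)
    raise TimeoutError(f"no result after {attempts} attempts")


# Simulated storage tiers standing in for successive item requests
tiers = iter(["offline", "offline", "online"])


def fake_check():
    # Return a value only once the product is online
    return "ready" if next(tiers) == "online" else None


waits = []  # records the requested sleeps instead of actually sleeping
status = poll_until(fake_check, timeout=5, step=1, sleep=waits.append)
print(status, len(waits))
```

Passing `sleep` as a parameter keeps the helper testable, and raising on timeout prevents the caller from continuing without a download URL.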

EarthKit

Using EarthKit, we can load the requested datasets and visualize them directly, making it easy to inspect the results and explore the data.

import earthkit.data
import earthkit.plots

# Iterate over filenames
data = []
for filename in filenames:
    data.append(earthkit.data.from_source("file", filename))

STYLE = earthkit.plots.styles.Style(
    colors="Spectral_r",
    levels=range(-20, 45),
    units="celsius",
    # Extend the colorbar at both ends
    extend="both",
)

figure = earthkit.plots.Figure(rows=2, columns=2, size=(10, 10))

for i, year in enumerate(range(start_year, end_year + 1, 5)):
    subplot = figure.add_map()
    subplot.contourf(data[i], style=STYLE)
    subplot.title(f"{year} - Climate DT - IFS NEMO {{short_name!u}} {{time:%d %B %Y}}")

figure.title("{variable_name} in {time:%B %d} of {time:%Y}", fontsize=14)
figure.legend(label="{variable_name!l} ({units})")
figure.show()

Regrid specs: in_grid={'grid': 'H1024', 'ordering': 'nested'}, out_grid={'grid': [0.1, 0.1]}

Regrid specs: in_grid={'grid': 'H1024', 'ordering': 'nested'}, out_grid={'grid': [0.1, 0.1]}

Regrid specs: in_grid={'grid': 'H1024', 'ordering': 'nested'}, out_grid={'grid': [0.1, 0.1]}

Regrid specs: in_grid={'grid': 'H1024', 'ordering': 'nested'}, out_grid={'grid': [0.1, 0.1]}

Cleanup

Let’s now remove the downloaded files.

for filename in filenames:
    os.remove(filename)